Any authentication implementation for a standalone SPA (one without a dedicated backend server, see the image below) eventually has to answer the question "Where do we store the access token?" after successful authentication and token exchange with the identity provider.

Typically, we are forced to choose between browser storage and a browser cookie. Both are vulnerable, and it is up to developers to decide which option has the stronger countermeasures in their application and is therefore the less vulnerable of the two.

If we search for an expert answer, we end up with mixed advice, since both options have their pros and cons. This section discusses the pros and cons of both options, and the hybrid approach that I recently implemented in one of our applications.

At a high level:

If we go with browser storage, we open a window for XSS attacks and have to implement mitigations against them.

If we go with browser cookies, we open a window for CSRF attacks and have to implement mitigations against them.

In detail:

Storing the access token in browser storage:

Assume our application authenticates the user against a backend AUTH REST service, receives an access token in the response, and stores it in browser local storage to perform authorized activities.

Pros:

- With the Angular framework's default protection, which treats all values as untrusted and sanitizes them before rendering, XSS attacks are much easier to deal with than XSRF.
- Unlike a cookie, local storage data is NOT sent with every request (attaching cookies is the browser's default behavior), and local storage has same-origin protection by default.
- RBAC on the UI side can be implemented without much effort, since the access token and its permission details remain accessible to Angular code.
- The access token is not subject to the 4 KB size limit of a cookie, a limit that becomes problematic when many claims and user permissions are attached to the token.

Cons:

- If an XSS attack succeeds, an attacker can steal the token and perform unauthorized activities with a valid access token, impersonating the user.
- Extra effort may be required from the developer to implement an HTTP interceptor that adds the bearer token to HTTP requests.
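The interceptor mentioned in the last point mostly comes down to one small step: read the token from storage and attach it as an `Authorization` header. The sketch below shows only that step, without framework code. The storage key `access_token` and the helper name `withBearerToken` are assumptions made for illustration, not names from any library.

```typescript
// Hypothetical storage key; use whatever key your login code writes to.
const TOKEN_KEY = "access_token";

// Minimal subset of the Web Storage API, so the sketch also runs outside a browser.
interface TokenStore {
  getItem(key: string): string | null;
}

// Returns a copy of the headers with an Authorization header added when a
// token is present; otherwise returns an unchanged copy of the headers.
function withBearerToken(
  headers: Record<string, string>,
  store: TokenStore,
): Record<string, string> {
  const token = store.getItem(TOKEN_KEY);
  if (!token) {
    return { ...headers };
  }
  return { ...headers, Authorization: `Bearer ${token}` };
}
```

In a browser, `localStorage` satisfies `TokenStore`, so an Angular interceptor could pass the result to `req.clone({ setHeaders: ... })` before forwarding the request.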

Storing the access token in a browser cookie:

Assume our application authenticates the user against a backend AUTH REST service, which returns the access token in the response, and the token is stored in a browser cookie (as an HTTP-only cookie) to perform authorized activities.

Pros:

- Because it is an HTTP-only cookie, an injected script cannot read it, which gives good protection against XSS attacks that try to steal the access token.
- No extra effort is required to pass the access token in each request, since the browser sends cookies with every request by default.

Cons:

- Extra effort is needed to prevent CSRF attacks. Although SameSite cookies and same-origin header checks provide CSRF protection, OWASP still recommends them only as a secondary defense, NOT as the primary defense, since they can still be bypassed; see https://tools.ietf.org/html/draft-ietf-httpbis-rfc6265bis-02#section-5.3.7.1
- Extra effort is needed to implement XSRF (anti-forgery) token generation and validation (if backend services are still vulnerable to form action requests), plus an HTTP interceptor in the Angular client to add the XSRF token to the request header.
- The maximum supported cookie size is 4 KB, which may be problematic if many claims and user permissions are attached to the token.
- Because the browser automatically sends the access token cookie with every request, any misconfiguration of allowed origins is always an open risk.
- An XSS vulnerability can still be used to defeat all available CSRF mitigation techniques.
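The client half of the XSRF token mitigation listed above can be sketched as follows: read the anti-forgery token from a script-readable cookie and copy it into a request header on state-changing requests, so that the server can compare the two. The names `XSRF-TOKEN` and `X-XSRF-TOKEN` are the defaults used by Angular's HttpClient; the helper functions and the method list are assumptions made for illustration.

```typescript
// Angular HttpClient defaults; other stacks may use different names.
const XSRF_COOKIE = "XSRF-TOKEN";
const XSRF_HEADER = "X-XSRF-TOKEN";

// Methods treated as state-changing in this sketch (an assumption).
const STATE_CHANGING = ["POST", "PUT", "PATCH", "DELETE"];

// Extracts one cookie value from a `document.cookie`-style string.
function readCookie(cookieString: string, name: string): string | null {
  for (const part of cookieString.split(";")) {
    const trimmed = part.trim();
    const eq = trimmed.indexOf("=");
    if (eq === -1) continue;
    if (trimmed.slice(0, eq) === name) {
      return decodeURIComponent(trimmed.slice(eq + 1));
    }
  }
  return null;
}

// Returns a copy of the headers, with the XSRF header added only for
// state-changing methods and only when the cookie is present.
function withXsrfHeader(
  method: string,
  headers: Record<string, string>,
  cookieString: string,
): Record<string, string> {
  if (STATE_CHANGING.indexOf(method.toUpperCase()) === -1) {
    return { ...headers };
  }
  const token = readCookie(cookieString, XSRF_COOKIE);
  return token ? { ...headers, [XSRF_HEADER]: token } : { ...headers };
}
```

Note that the XSRF cookie has to be readable by script (not HTTP-only) for this to work, unlike the access token cookie itself. Angular's HttpClient includes built-in support for this pattern, so a hand-written interceptor may only be needed when the cookie or header names differ from what the backend expects.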

Storing the access token in a hybrid approach:

In a scenario such as OAuth 2.0 flow integration for an SPA client (either the “implicit grant flow” or the “auth code with PKCE extension flow”), after user authentication and token exchange the identity provider (e.g. IdentityServer4, Azure AD B2C, ForgeRock, etc.) returns the access token in the HTTP response; it does not set the access token as a cookie through a response header. This is the default behavior of identity providers for public clients using the “implicit flow” or the “auth code + PKCE flow”. Since the access token is NOT set as a cookie on the server side, the “SameSite” and “HttpOnly” protections described earlier cannot be relied on; an HTTP-only cookie, in particular, can be set only from the server side.

In scenarios like the one above, the only places to store the access token are browser local storage or session storage. But if we store the access token there and our application is vulnerable to an XSS attack, we run the risk that an attacker steals the token from local storage and impersonates that valid user with all of their permissions.

Considering the threats mentioned above, I would recommend a hybrid approach for better protection against XSS and XSRF attacks.

Continue storing the access token in local storage, but add a session fingerprint check as a secondary, defense-in-depth protection. The session fingerprint should be stored as an HTTP-only cookie, which XSS cannot read or tamper with. While validating the access token in the Authorization header, also validate the session fingerprint in the HTTP-only cookie. If both the access token and the session fingerprint cookie are valid, treat the request as valid; if the HTTP-only cookie is missing or does not match, reject the request and return Unauthorized.
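A minimal server-side sketch of this double check is shown below. It is not the exact implementation used in our application. It assumes that the fingerprint issued at login is kept in a server-side store keyed by a session id carried in the token, so the fingerprint itself never appears in anything script can read. The cookie name `session_fp`, the `sessionId` claim, and all type and function names are hypothetical.

```typescript
// Hypothetical cookie name for the session fingerprint.
const FINGERPRINT_COOKIE = "session_fp";

interface IncomingRequest {
  authorizationHeader?: string;    // e.g. "Bearer eyJ..."
  cookies: Record<string, string>; // parsed from the Cookie header
}

interface TokenClaims {
  subject: string;
  sessionId: string; // assumed claim linking the token to a login session
}

// Stand-in for real token validation (signature, expiry, audience, ...).
type TokenValidator = (token: string) => TokenClaims | null;

// Compares two strings without exiting early on the first mismatch.
function constantTimeEquals(a: string, b: string): boolean {
  if (a.length !== b.length) return false;
  let diff = 0;
  for (let i = 0; i < a.length; i++) {
    diff |= a.charCodeAt(i) ^ b.charCodeAt(i);
  }
  return diff === 0;
}

// Returns 200 only when the bearer token is valid AND the HTTP-only
// fingerprint cookie matches the value stored for that session.
function authorize(
  req: IncomingRequest,
  validateToken: TokenValidator,
  fingerprintStore: Record<string, string>, // sessionId -> fingerprint
): 200 | 401 {
  const header = req.authorizationHeader || "";
  if (header.indexOf("Bearer ") !== 0) return 401;

  const claims = validateToken(header.slice("Bearer ".length));
  if (claims === null) return 401;

  const cookie = req.cookies[FINGERPRINT_COOKIE];
  const expected = fingerprintStore[claims.sessionId];
  if (!cookie || !expected) return 401;

  return constantTimeEquals(cookie, expected) ? 200 : 401;
}
```

The design choice that matters here is that the expected fingerprint lives on the server (or, alternatively, only a hash of it is placed in the token). If the raw fingerprint were readable from the token in local storage, a script that steals the token could also forge the cookie.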

This way, even if an XSS attack succeeds and the attacker steals the token from local storage, the attacker still cannot perform unauthorized activities with the stolen token, because the secondary defense checks the referenced HTTP-only cookie, which an XSS attack cannot obtain. We are much better protected now!

I would recommend the hybrid approach above only for scenarios where your only choice is to store the access token in local storage or session storage.

But if your application can set the access token in a cookie on the server side after successful authentication, with “HttpOnly”, “SameSite=Lax”, and “Secure” enabled, I would still recommend storing the access token in a cookie, subject to the following open risks and conditions.

- As per OWASP standards, “SameSite” cookies and “same-origin/header” checks are considered only a secondary defense. XSRF token-based mitigation is recommended as the “primary defense”, which again requires developer effort in each module to add the XSRF token in an HTTP interceptor. Alternatively, you provide proper justification for living with the open vulnerability of having only a “secondary defense” as CSRF protection.
- None of our GET APIs are "state-changing requests", so developers do not violate this section: https://www.w3.org/Protocols/rfc2616/rfc2616-sec9.html#sec9.1.1
- We do not foresee our token size reaching 4 KB in the future. The current size is ~2 KB.
- If SameSite=Strict is applied, it will affect application behavior, since it also blocks the cookie from being sent in cross-site top-level navigation requests.
- None of our backend services support [FromQuery] and [FromForm] data binding.
- Teams are justified in living with the “Cons” of the browser cookie explained in the section above.
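For the server-side cookie option, the attributes named above (HttpOnly, SameSite=Lax, Secure) and the 4 KB concern can be illustrated with a small sketch that builds the `Set-Cookie` header value and checks its size. The function names, the cookie name in the usage note, and the lifetime are assumptions made for illustration; the 4096-character check is only a rough approximation of the per-cookie limit.

```typescript
interface CookieOptions {
  maxAgeSeconds: number;
  path: string;
  sameSite: "Lax" | "Strict";
}

// Builds a Set-Cookie header value with HttpOnly and Secure always enabled.
function buildTokenCookie(
  name: string,
  value: string,
  options: CookieOptions,
): string {
  return [
    `${name}=${encodeURIComponent(value)}`,
    `Max-Age=${options.maxAgeSeconds}`,
    `Path=${options.path}`,
    "HttpOnly",
    "Secure",
    `SameSite=${options.sameSite}`,
  ].join("; ");
}

// Rough guard against the ~4 KB per-cookie limit discussed in the text.
// The encoded header is ASCII, so string length approximates byte length.
function fitsInCookie(headerValue: string): boolean {
  return headerValue.length <= 4096;
}
```

A server could call `buildTokenCookie("access_token", token, { maxAgeSeconds: 900, path: "/", sameSite: "Lax" })` after login and fall back to another storage strategy when `fitsInCookie` returns false.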

Conclusion

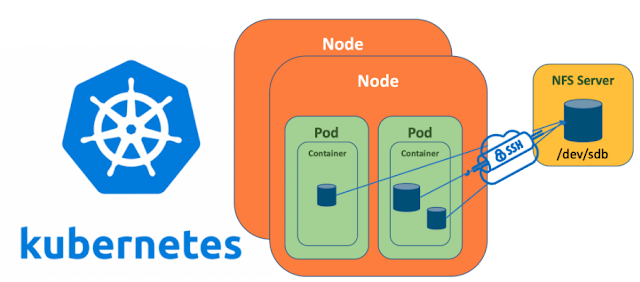

The debate over browser storage versus browser cookies will continue unless our SPA design has a dedicated backend server that stores the access token on the server in the HTTP context and does NOT expose the access token to the browser at all.

Until then, it is up to developers to decide which browser storage mechanism gives their application more multi-layered (primary and defense-in-depth) protection and is therefore less vulnerable than the other. The reasoning behind continuing with browser storage, and the possibility of storing the token in a browser cookie with its open risks, are both explained above.